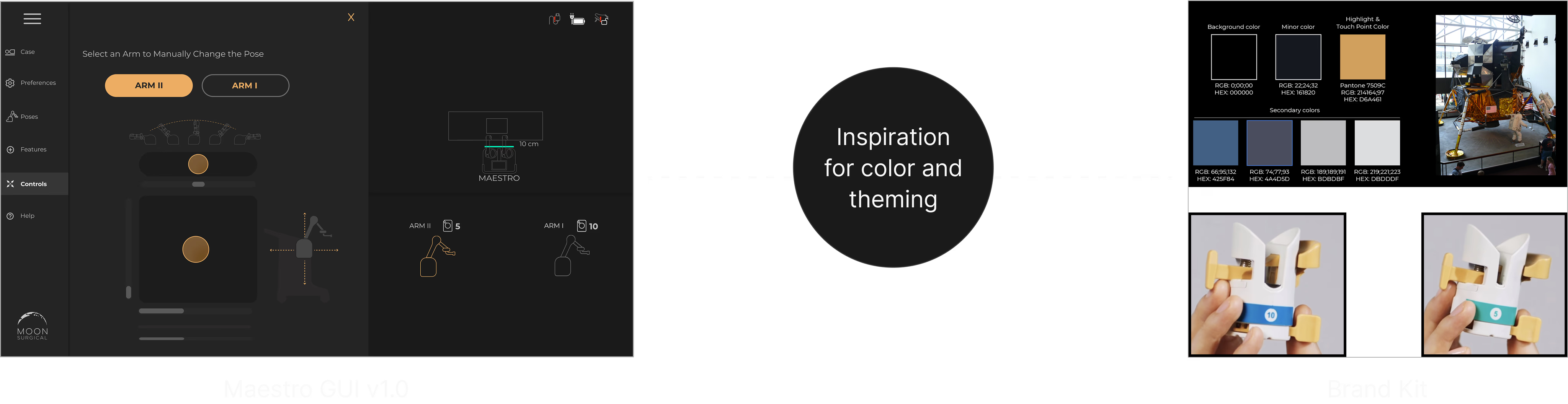

What Surgeons and Admins See

Maestro Insights has two faces: a surgeon dashboard for personal performance, and an admin dashboard for hospital-wide robot management. Both pull from the same data the robot collects during every procedure.

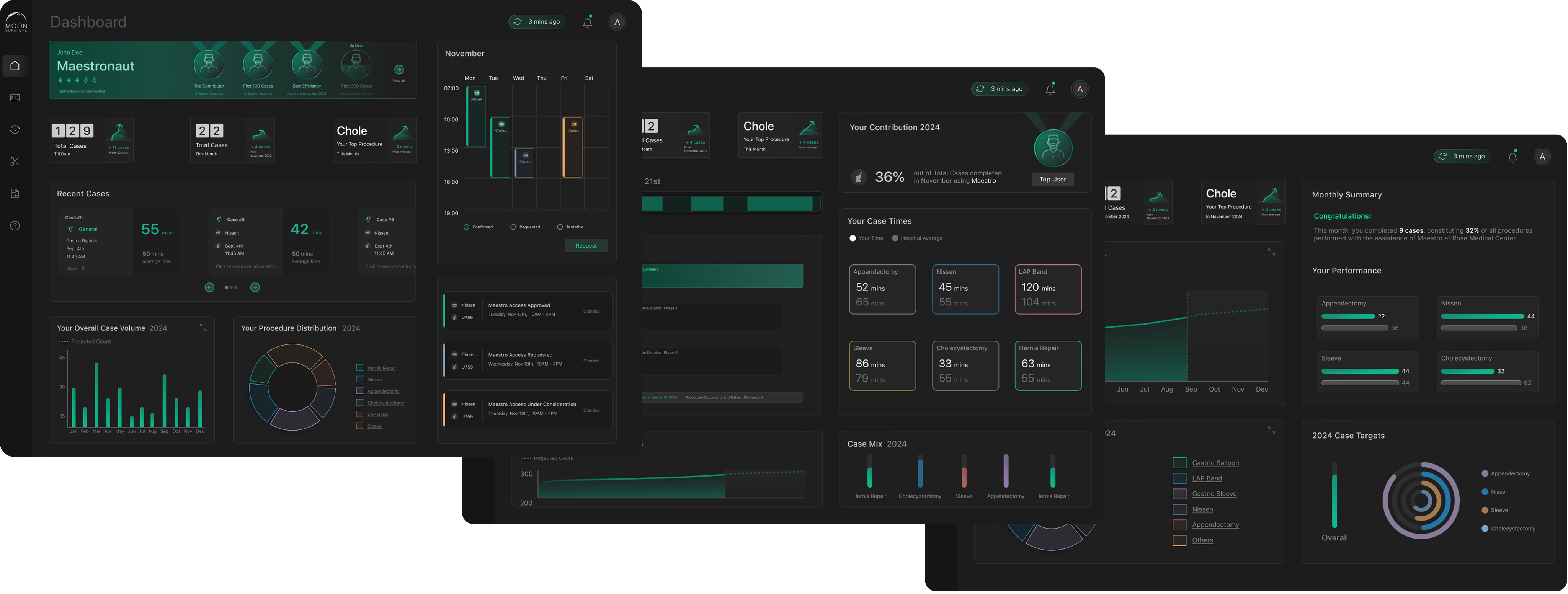

Trend Cards

The surgeon dashboard opens with trend cards that surface changes in performance KPIs. No digging, no spreadsheets. If a surgeon’s start time improved or their case duration spiked, they see it immediately.
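As a rough illustration of what a trend card might compute, the sketch below compares a surgeon's most recent cases against the cases just before them. The function name `trend`, the five-case window, and the sample durations are all assumptions made for this example, not the product's actual logic.

```python
from statistics import mean


def trend(values, window=5):
    """Relative change between the latest `window` cases and the window before it.

    Hypothetical helper: returns None when there is too little history
    (or a zero baseline) to call a trend.
    """
    if len(values) < 2 * window:
        return None
    recent = mean(values[-window:])
    prior = mean(values[-2 * window:-window])
    if prior == 0:
        return None
    return (recent - prior) / prior


# Made-up case durations in minutes, oldest first.
case_minutes = [120, 118, 122, 119, 121, 131, 135, 128, 133, 133]
change = trend(case_minutes)
print(f"Case duration: {change:+.0%} vs. previous {5} cases")
```

A card would then only need the sign and size of `change` to decide whether to show an improvement or a spike.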

Distribution Charts

Interactive charts show procedure volume over time. Surgeons can track whether they’re hitting their targets, and the data updates after every case.

Recent Cases + AI Synopsis

Each case gets a detailed breakdown: timing, instruments used, staff, and notes. The AI synopsis generates a summary with actionable takeaways. No manual review needed.

Recent cases with timing, staff, and procedure details

Clicking into a case reveals the AI-generated synopsis — an LLM-written summary of what happened, what changed, and what to pay attention to next.

AI synopsis with performance analysis and actionable takeaways

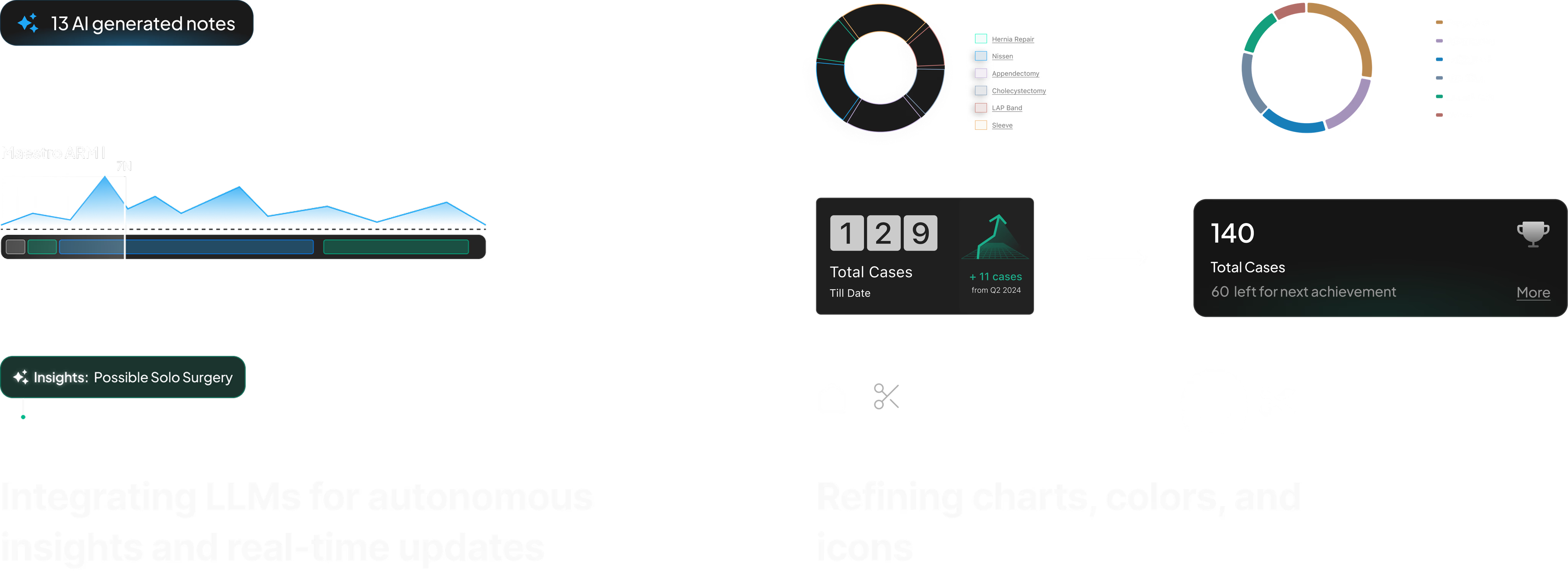

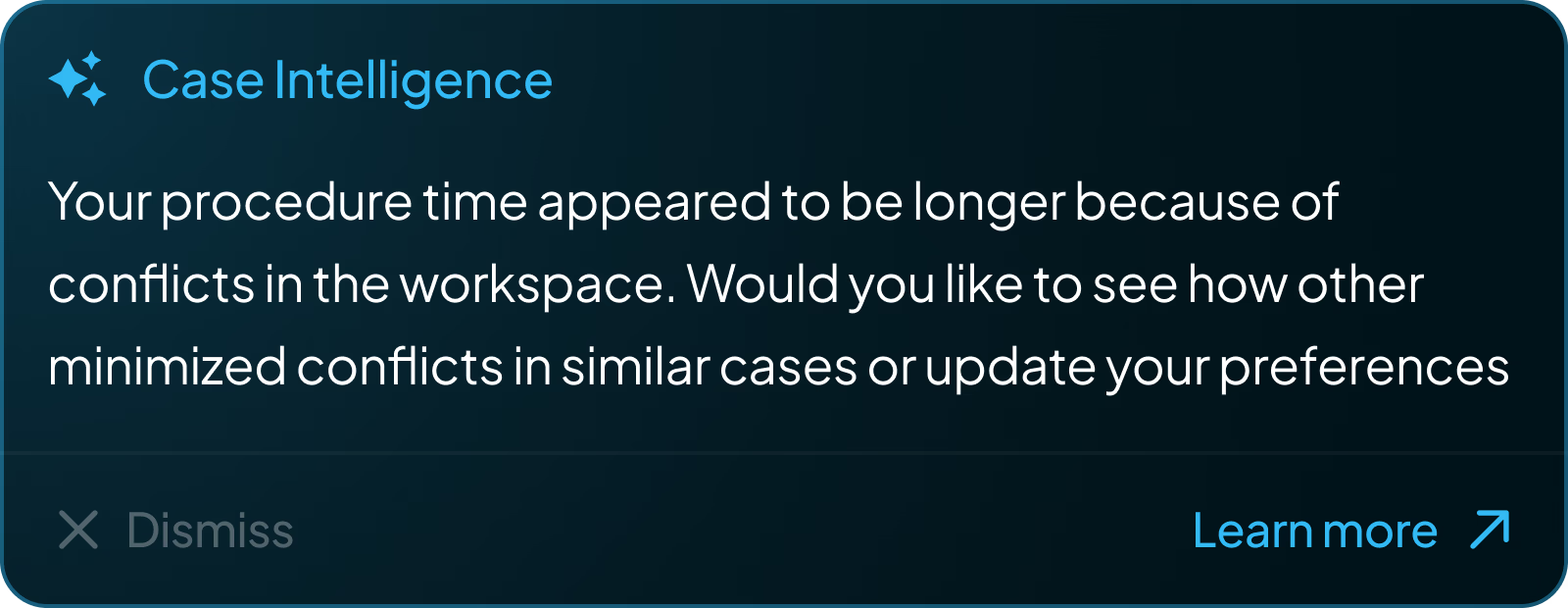

Every procedure generates hundreds of data points — arm force readings, instrument switches, phase transitions, timing deltas. This raw data flows through our processing pipeline and into an LLM with system prompts tuned to surface what surgeons care about: what changed from their baseline, what took longer than expected, and what to watch next time. I worked with our data scientist to define which metrics mattered and iterated on the prompt structure until the summaries matched the language surgeons actually use in post-op discussions.

Case Intelligence: proactive AI notifications surfaced after each procedure
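The "what changed from their baseline" step can be sketched as a filter that runs before any text reaches the LLM. This is a minimal sketch under stated assumptions: the metric names, the 15% threshold, and the helpers `baseline_deltas` and `build_context` are hypothetical and do not describe the real pipeline or prompt.

```python
def baseline_deltas(case, baseline, threshold=0.15):
    """Return metrics whose relative change from baseline meets the threshold.

    Hypothetical helper: `case` and `baseline` map metric names to numbers.
    """
    flagged = {}
    for name, value in case.items():
        base = baseline.get(name)
        if not base:  # skip metrics with no baseline (or a zero baseline)
            continue
        delta = (value - base) / base
        if abs(delta) >= threshold:
            flagged[name] = round(delta, 2)
    return flagged


def build_context(flagged):
    """Format flagged metrics as bullet lines a summary prompt could include."""
    return "\n".join(
        f"- {name}: {delta:+.0%} vs. baseline"
        for name, delta in sorted(flagged.items())
    )


# Made-up numbers for illustration only.
case = {"docking_minutes": 14.0, "instrument_switches": 22, "console_minutes": 95.0}
baseline = {"docking_minutes": 10.0, "instrument_switches": 20, "console_minutes": 96.0}

flagged = baseline_deltas(case, baseline)
context = build_context(flagged)
print(context)
```

Filtering first keeps the summary focused on the few metrics that moved, rather than asking the model to sift through every reading.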

Surgeons can’t type notes during a procedure. I designed a voice interface: say “Hey Maestro” and speak. There’s no screen UI for this — it’s an OR environment. Instead, the robot’s RGB strip pulses with an organic halo to show it’s listening. The glow responds to speech cadence and fades when done.

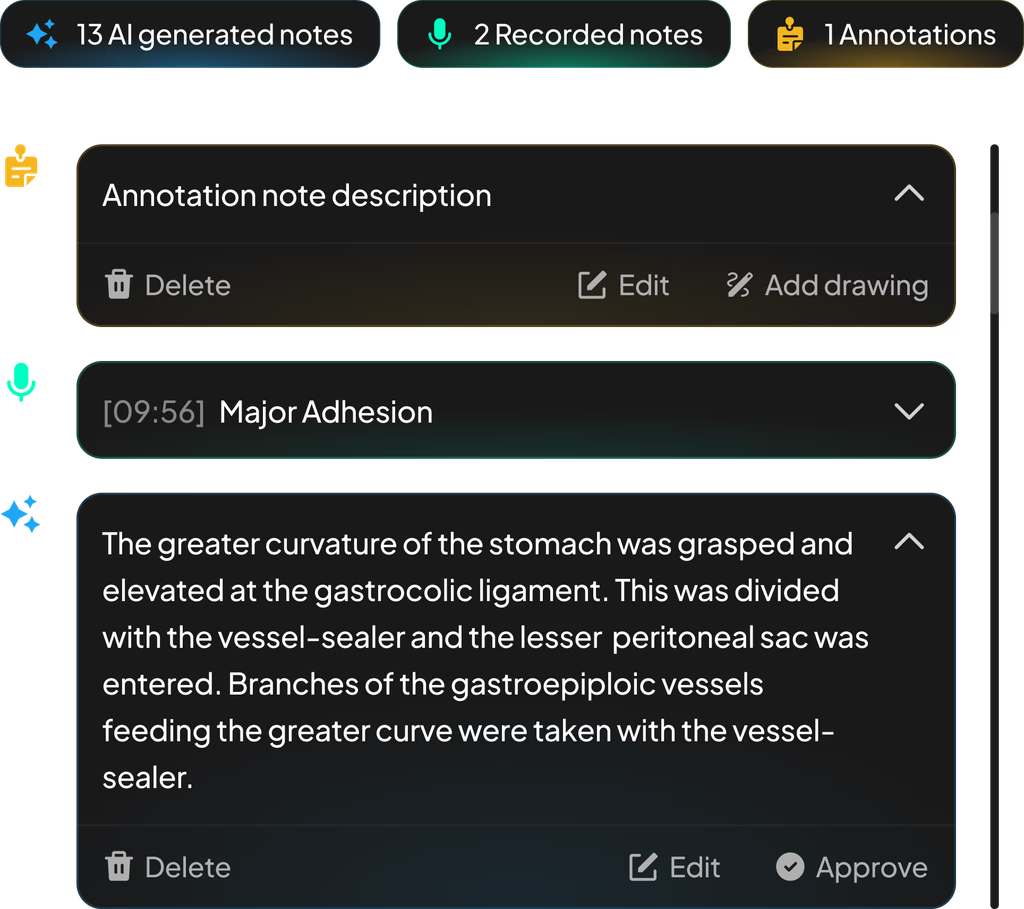

Once the voice is captured, the system processes it into structured notes. Surgeons can review AI-generated summaries, listen to recorded clips, and approve or edit annotations after the procedure. This approve/edit/delete flow was a deliberate safety-first decision — in a clinical environment, no AI-generated content should persist without surgeon verification.

AI notes, voice recordings, and annotations — captured hands-free during surgery
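One way to express the approve/edit/delete rule is to give every AI-generated note a review status and persist only the approved ones. The sketch below assumes a three-state model and that an edited note still needs explicit approval; the class and function names are hypothetical.

```python
from dataclasses import dataclass
from enum import Enum


class Status(Enum):
    PENDING = "pending"
    APPROVED = "approved"
    DELETED = "deleted"


@dataclass
class Annotation:
    text: str
    status: Status = Status.PENDING  # AI output starts unverified

    def edit(self, new_text: str) -> None:
        # Assumption: an edit resets the note to pending until approved.
        self.text = new_text
        self.status = Status.PENDING

    def approve(self) -> None:
        self.status = Status.APPROVED

    def delete(self) -> None:
        self.status = Status.DELETED


def persistable(notes):
    """Only surgeon-approved notes are eligible to be saved."""
    return [n for n in notes if n.status is Status.APPROVED]


notes = [
    Annotation("Port placement adjusted"),
    Annotation("Check arm 2 clearance"),
    Annotation("Never mind"),
]
notes[0].approve()
notes[1].edit("Check arm 2 clearance before docking")
notes[2].delete()
saved = persistable(notes)
```

Here only the first note would be saved: the second is still awaiting approval after its edit, and the third was deleted.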

Admin Dashboard + OR Traffic Control

The admin side functions as a real-time observability layer for the entire robotics program. Administrators monitor site-wide performance — surgeon adoption, case volume, service line breakdown — and can drill into live OR activity as it happens.

Site-wide KPIs: surgeon adoption, case volume, and top service lines at a glance

OR Traffic Control is the real-time monitoring view. Each room shows live case status, timeline progress, staff allocation, and delay alerts — with a modal for drilling into active procedure events as they happen. The UI handles non-deterministic state: cases run long, rooms turn over unpredictably, and delays cascade.

OR Traffic Control with live case modal showing real-time procedure events
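To make the cascading-delay case concrete, here is a small sketch of how one room's projected schedule could be recomputed when a case runs long. The 20-minute turnover, the minutes-since-midnight times, and the function `project_schedule` are assumptions for illustration, not the system's scheduling logic.

```python
def project_schedule(cases, turnover=20):
    """Project start times and delays for one room's cases, in order.

    Hypothetical helper. `cases` is a list of (planned_start, expected_minutes)
    tuples, with times in minutes since midnight. Returns a list of
    (projected_start, delay_minutes) tuples.
    """
    projected = []
    room_free = 0  # earliest minute the room can take its next case
    for planned_start, minutes in cases:
        start = max(planned_start, room_free)
        projected.append((start, start - planned_start))
        room_free = start + minutes + turnover
    return projected


# The first case is now expected to take 150 minutes instead of the planned 120.
room = [(450, 150), (600, 90), (720, 60)]
schedule = project_schedule(room)
print(schedule)
```

In this made-up example the overrun pushes the second case back 20 minutes and the third back 10, which is the kind of knock-on effect a delay alert would need to surface.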